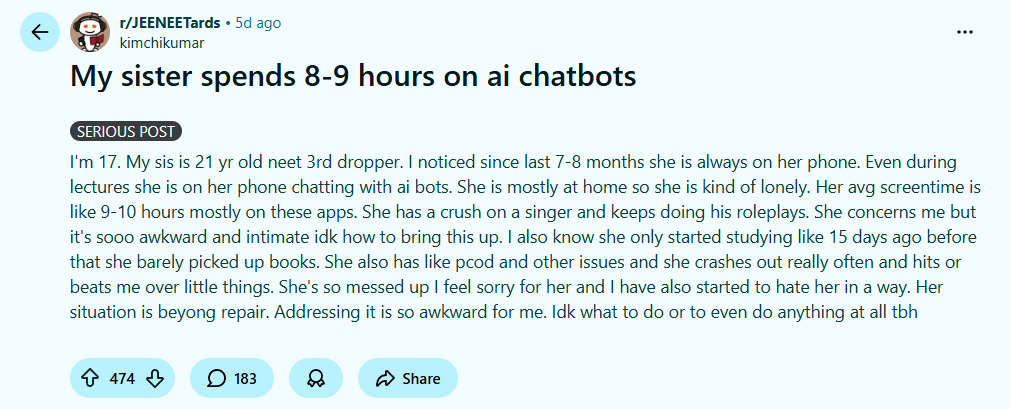

A 17-year-old boy posted online, worried about his 21-year-old sister. She’s spending 8 to 9 hours a day talking to an AI chatbot: not just chatting, but roleplaying with it. The chatbot plays the role of her favorite singer. She talks to “him” for most of her waking hours.

Writing in Reddit’s r/JeeNEETards, her brother noted that other things weren’t going well in her life either. He was reaching out to strangers on the internet because he didn’t know what else to do.

Is it even “wrong”?

Here’s the uncomfortable part: it’s genuinely difficult to say.

AI companions have only existed for a couple of years. We don’t have decades of research, cautionary tales, or cultural consensus around what healthy AI use looks like. The rulebook hasn’t been written yet. So when her brother calls it worrying, he’s going on instinct, and his instincts are probably right, but “probably” is doing a lot of work in that sentence.

What we can look at is the pattern itself.

“She has a crush on a singer and keeps doing his roleplays. She concerns me but it’s sooo awkward and intimate idk how to bring this up,” he wrote.

The parallel emotional world

Roleplaying with an AI that mimics your favorite celebrity, someone unattainable who in this simulation chooses you, listens to you, and responds only to you, is not a casual hobby. It’s the construction of an alternate emotional world.

And the terrifying thing about well-designed AI is how real it feels. It remembers what you said. It responds in ways that feel warm, present, attuned. It never cancels plans. It never has a bad day that makes it short with you.

The line between “this is fun fiction” and “this is my relationship” doesn’t break dramatically. It blurs, slowly, over hundreds of hours of conversation.

Built to hook

Let’s not be naive about the design intent here either.

AI chatbots, especially companion-style ones, are products. They are built to maximize engagement, which means they are built to feel indispensable. In that sense, they are no different from social media feeds, autoplay videos, or slot machines. The mechanism is different, but the goal is the same: to keep you coming back.

Eight to nine hours a day isn’t a quirk. It’s a product working exactly as designed.

“Even during lectures she is on her phone chatting with ai bots. She is mostly at home so she is kind of lonely. Her avg screentime is like 9-10 hours mostly on these apps,” the user wrote.

The loneliness nobody’s talking about enough

But here’s what I keep circling back to: why is she there for 8-9 hours?

An AI chatbot is rarely the problem. It’s almost always the solution to a different, quieter problem. Here, that problem seems to be some mix of loneliness, social anxiety, a sense of not being understood, and a real-world life that feels harder to navigate than a simulated one.

Her brother is worried, things aren’t going well, and he felt he needed to post anonymously online. All of this points to a household where connection has already broken down somewhere. She isn’t choosing a chatbot over her family out of nowhere. Something led her there.

And she is not alone. This is increasingly the story of young people today: deeply online, digitally fluent, and profoundly lonely. The chatbot doesn’t create the loneliness. It just fills the space it finds.

So what do we do?

This is not a post with a clean answer. If you’re the brother in this story, the worst thing you can do is frame it as an intervention about “too much screen time.” That’s not what this is about.

This is about asking, genuinely and without an agenda, “How are you actually doing?”

The chatbot isn’t listening to her for 8-9 hours a day. It’s just responding to her as designed.